5. Create your own Processing (Part 2)

Now that you have created your Processing on Picsellia, let's link it to your code by building the Docker image that will be used to launch your scripts automatically.

To follow along easily, you can go to this GitHub repository and use it as a template for your own Processings!

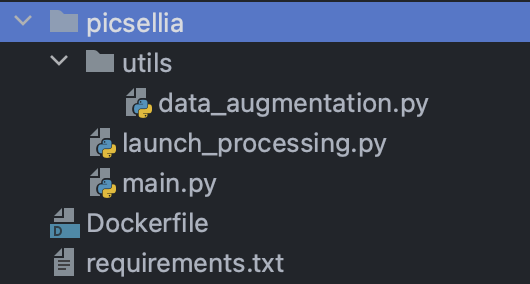

Repository structure

The main goal when you create a Processing is to package your code in a Docker image that contains all your requirements. This image runs alongside code from us that captures the logs, so you can follow them live on the platform.

Let's have a look at the structure of our repo. It's pretty simple; here is the role of each file:

- `Dockerfile` contains all the instructions to build the image and launch our `launch_processing.py` script.
- `requirements.txt` contains the packages needed to run our code. You can add any package you need there!
- `launch_processing.py` is the entrypoint of our Processing. You should not modify this file: it launches a subprocess that runs the actual code you wrote.
- `main.py` is your script. This is where you retrieve information about your Processing and your Dataset and perform the actions you want (for example, data augmentation).
- The `utils` folder is where you put all your sub-scripts, functions and classes imported by your script. Putting everything there ensures you can import it from `main.py` (although everything could also live in the main file; that's your choice).

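```dockerfile
# Lightweight Python base image; change it to whatever your script needs
FROM python:3.8-bullseye AS base

# Don't write .pyc files, and keep stdout/stderr unbuffered so logs appear live
ENV PYTHONDONTWRITEBYTECODE=1
ENV PYTHONUNBUFFERED=1
ARG DEBIAN_FRONTEND=noninteractive

# The Picsellia SDK is mandatory to communicate with the platform
RUN pip3 install --no-cache-dir picsellia

# Install your own requirements
COPY requirements.txt .
RUN pip3 install -r requirements.txt

# Copy the build context (the processing folder) into the image
COPY . /

# Give user ID 42420 ownership of the /picsellia directory
RUN chown -R 42420 /picsellia

# The container runs `python3 /picsellia/launch_processing.py` on start
ENTRYPOINT ["python3"]
CMD ["/picsellia/launch_processing.py"]
```

The main things to understand here are: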

- The base image is a basic, lightweight Python image. You can change it to whatever is needed to run your script.
- We install the Picsellia Python package (a mandatory step to communicate with Picsellia).
- We install all the packages from our `requirements.txt` file.
- We set `python3` as the entrypoint and `/picsellia/launch_processing.py` as the default command. The container therefore runs the `launch_processing.py` script automatically when it starts.
- We give user ID 42420 ownership of the `/picsellia` directory, so that user has write access to it.
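The `ENTRYPOINT` and `CMD` lines work together. When both use the exec (JSON array) form, Docker runs the entrypoint followed by the command. Any arguments passed to `docker run <image> ...` replace `CMD` but keep `ENTRYPOINT`. The plain-Python model below illustrates that rule with the values from our Dockerfile. The `effective_command` helper is hypothetical and is not part of Docker or Picsellia.

```python
from typing import List, Optional


def effective_command(
    entrypoint: List[str],
    cmd: List[str],
    run_args: Optional[List[str]] = None,
) -> List[str]:
    """Model how Docker combines exec-form ENTRYPOINT and CMD.

    Non-empty arguments given to `docker run <image> ...` replace CMD,
    while ENTRYPOINT is kept and prefixed to them.
    """
    args = run_args if run_args else cmd
    return entrypoint + args


# Values taken from the Dockerfile
ENTRYPOINT = ["python3"]
CMD = ["/picsellia/launch_processing.py"]

if __name__ == "__main__":
    print(effective_command(ENTRYPOINT, CMD))
    # ['python3', '/picsellia/launch_processing.py']
    print(effective_command(ENTRYPOINT, CMD, ["-c", "print('hello')"]))
    # ['python3', '-c', "print('hello')"]
```

This split is why overriding the arguments at `docker run` time (for quick local checks, for example) still runs them with `python3`.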

We will not go over the `launch_processing.py` file in this tutorial. Just leave it as it is: it will run your script and capture its stdout.
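We don't cover `launch_processing.py` itself, but we can sketch the general technique it describes: running your code as a child process and relaying its output line by line. The standard `subprocess` module is enough for this. This is a hypothetical illustration (`run_and_stream` is our own name), not Picsellia's actual launcher.

```python
import subprocess
import sys
from typing import Callable, List


def run_and_stream(command: List[str], on_line: Callable[[str], None]) -> int:
    """Run `command` as a subprocess and pass each output line to `on_line`.

    stderr is merged into stdout so tracebacks are captured too.
    Returns the subprocess exit code.
    """
    with subprocess.Popen(
        command,
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,
        text=True,
        bufsize=1,  # line-buffered on our side of the pipe
    ) as process:
        assert process.stdout is not None
        for line in process.stdout:
            on_line(line.rstrip("\n"))
    # Leaving the `with` block waits for the process, so returncode is set
    return process.returncode


if __name__ == "__main__":
    # `-u` makes the child Python unbuffered, like PYTHONUNBUFFERED=1
    code = run_and_stream(
        [sys.executable, "-u", "-c", "print('step 1'); print('step 2')"],
        on_line=lambda line: print(f"[log] {line}"),
    )
    print("exit code:", code)
```

Unbuffered output on the child's side matters here. Otherwise the lines may only arrive when the script exits, which is one reason the Dockerfile sets `PYTHONUNBUFFERED`.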

Now let's look at our data-augmentation script, `main.py`.

Initialization

```python
import os

from picsellia import Client
from picsellia.sdk.dataset import DatasetVersion

from utils.data_augmentation import simple_rotation

# Credentials and the Job id are read from environment variables
api_token = os.environ["api_token"]
organization_id = os.environ["organization_id"]
job_id = os.environ["job_id"]

client = Client(
    api_token=api_token,
    organization_id=organization_id
)

# Fetch the Job running this Processing and read its context
job = client.get_job_by_id(job_id)
context = job.sync()["datasetversionprocessingjob"]

input_dataset_version_id = context["input_dataset_version_id"]
output_dataset_version = context["output_dataset_version_id"]  # id of the target Dataset version
parameters = context["parameters"]

input_dataset_version: DatasetVersion = client.get_dataset_version_by_id(
    input_dataset_version_id
)
```

You usually shouldn't need to change the first part of the script, up to the definition of the `context` variable. It simply initializes the Picsellia client, fetches the Job that is running on Picsellia for your Processing, and retrieves its context.
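The script reads `api_token`, `organization_id` and `job_id` with `os.environ[...]`, so a missing variable shows up as a bare `KeyError`. If you run `main.py` locally while developing, a small helper can report every missing variable at once. The variable names below come from the script; the `read_credentials` helper and its `ProcessingCredentials` type are hypothetical.

```python
import os
from typing import Mapping, NamedTuple


class ProcessingCredentials(NamedTuple):
    api_token: str
    organization_id: str
    job_id: str


# Names used by main.py
REQUIRED_VARIABLES = ("api_token", "organization_id", "job_id")


def read_credentials(env: Mapping[str, str] = os.environ) -> ProcessingCredentials:
    """Read the variables main.py expects, failing with one clear message."""
    # Treat unset and empty variables the same way
    missing = [name for name in REQUIRED_VARIABLES if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variable(s): " + ", ".join(missing))
    return ProcessingCredentials(*(env[name] for name in REQUIRED_VARIABLES))
```

You would then call `credentials = read_credentials()` and pass `credentials.api_token` and `credentials.organization_id` to `Client(...)`.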

The context is very important because it contains the information to:

- retrieve the Dataset you are running your Processing on

- retrieve the target Dataset where our new images will be stored

- retrieve the potential parameters of our Processing
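The rest of the script depends on these three pieces of information, so it can help to extract them in one place. The sketch below relies only on the keys already used in `main.py`: `datasetversionprocessingjob`, `input_dataset_version_id`, `output_dataset_version_id` and `parameters`. The `ProcessingContext` class, the `parse_context` helper, the string typing of the ids and the example `angle` parameter are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class ProcessingContext:
    input_dataset_version_id: str
    output_dataset_version_id: str
    parameters: Dict[str, Any] = field(default_factory=dict)

    def get_parameter(self, name: str, default: T, cast: Callable[[Any], T]) -> T:
        """Return a Processing parameter converted with `cast`, or `default` if unset."""
        value = self.parameters.get(name)
        return default if value is None else cast(value)


def parse_context(sync_result: Dict[str, Any]) -> ProcessingContext:
    """Extract the fields main.py uses from the dict returned by job.sync()."""
    context = sync_result["datasetversionprocessingjob"]
    return ProcessingContext(
        input_dataset_version_id=context["input_dataset_version_id"],
        output_dataset_version_id=context["output_dataset_version_id"],
        # Treat a null or missing parameters entry as "no parameters"
        parameters=context.get("parameters") or {},
    )
```

For example, `parse_context(job.sync()).get_parameter("angle", 45.0, float)` would return a hypothetical `angle` parameter as a float, falling back to 45 degrees when it is not set.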

Then we fetch the input Dataset version using the id we got from the context.

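```python
# Download the images of the input Dataset version into ./data
input_dataset_version.download("data")
file_list = [os.path.join("data", path) for path in os.listdir("data")]

# Rotate every image and write the results to ./rotated_data
target_path = "rotated_data"
simple_rotation(file_list, target_path=target_path)
new_file_list = [os.path.join(target_path, path) for path in os.listdir(target_path)]

# Upload the rotated images to the Datalake, tagged so they are easy to find
datalake = client.get_datalake()
data_list = datalake.upload_data(new_file_list, tags=["augmented", "processing"])

# Add the uploaded data to the output Dataset version created with the Processing
output_dataset: DatasetVersion = client.get_dataset_version_by_id(
    output_dataset_version
)
output_dataset.add_data(data_list)
```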

If you are already familiar with our Python SDK, this last piece should be easy to read.

In short, it:

- downloads the images from our input Dataset
- rotates each file with the function in `utils/data_augmentation.py` and saves the results in a new directory
- uploads every rotated image to the Datalake
- adds all that data to the Dataset that was created when launching the Processing
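The script builds its file lists with `os.listdir`, which returns every entry in the directory. That includes sub-directories or non-image files, which `Image.open` would fail on. If your downloaded data might contain such entries, a filtered listing can replace those list comprehensions. The `list_image_files` helper and its set of extensions are assumptions to adapt to your data.

```python
import os
from typing import Iterable, List

# Hypothetical default: extensions treated as images; adjust to your Dataset
IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png", ".bmp", ".tif", ".tiff", ".webp")


def list_image_files(directory: str, extensions: Iterable[str] = IMAGE_EXTENSIONS) -> List[str]:
    """Return sorted paths of regular files in `directory` with an image extension.

    Unlike a bare os.listdir, this skips sub-directories and unrelated files.
    """
    allowed = {ext.lower() for ext in extensions}
    paths = []
    with os.scandir(directory) as entries:
        for entry in entries:
            # Compare extensions case-insensitively (".PNG" counts as ".png")
            if entry.is_file() and os.path.splitext(entry.name)[1].lower() in allowed:
                paths.append(entry.path)
    return sorted(paths)
```

With it, `file_list = list_image_files("data")` and `new_file_list = list_image_files(target_path)` would replace the two `os.listdir` comprehensions.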

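And finally, here is our little function in `utils/data_augmentation.py` that rotates our images:

```python
import os
from pathlib import Path

from PIL import Image


def simple_rotation(filepaths: list, target_path: str):
    # Create the output directory if it does not exist yet
    os.makedirs(target_path, exist_ok=True)
    for path in filepaths:
        filename = Path(path).name
        with Image.open(path) as image:
            # Rotate by 45 degrees counter-clockwise; the output keeps the original size
            rotated_image = image.rotate(45)
            rotated_image.save(os.path.join(target_path, filename))
```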
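By default, Pillow's `Image.rotate(45)` keeps the original image size (`expand=False`). The corners of the rotated picture are therefore cut off, and the uncovered areas are filled with the default fill color. Passing `expand=True` to `rotate` enlarges the output so the whole rotated image fits instead. To estimate how much larger it gets, use the standard bounding-box formula for a rotated w x h rectangle: w' = w|cos θ| + h|sin θ| and h' = w|sin θ| + h|cos θ|. The hypothetical helper below computes it. It is plain geometry for intuition, and Pillow's own rounding of the expanded size may differ by a pixel.

```python
import math
from typing import Tuple


def rotated_bounding_box(width: float, height: float, angle_degrees: float) -> Tuple[float, float]:
    """Width and height of the smallest axis-aligned box containing a
    width x height rectangle rotated by `angle_degrees` about its centre."""
    theta = math.radians(angle_degrees)
    cos_t, sin_t = abs(math.cos(theta)), abs(math.sin(theta))
    return (width * cos_t + height * sin_t, width * sin_t + height * cos_t)
```

For example, a 1000 x 1000 image rotated by 45 degrees needs a canvas of roughly 1414 x 1414 pixels to avoid cropping.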

Now that you know how to organize your script to run your own Processing, let's build it so it becomes available on Picsellia and you can run it anytime.

Build and push Docker image

Using the public Docker Hub

If you don't have a private Docker registry, we suggest pushing your Docker images to Docker Hub.

To do this, first log in with your usual credentials (`docker login` with no registry argument logs in to Docker Hub).

Then, open a shell in the `processing` folder of the repository and enter the following command:

```bash
docker build . -t <namespace>/<image_name>:<tag>
```

Here, `<namespace>` is your Docker Hub username or organization. For example, if I wanted to push to the Picsellia Docker Hub account and name my image `processing-rotate`, my command would look like this:

```bash
docker build . -t picsellpn/processing-rotate:1.0
```

Then push your image with the following command:

```bash
docker push <namespace>/<image_name>:<tag>
```

Using a Picsellia private Docker Registry

If you have a Picsellia Enterprise account, you can access a private container registry hosted by us.

You can ask us for your credentials, and we will provide you with the following:

- a registry URL, something like `l76gd76h.gra7.container-registry.ovh.net`
- a username
- a password

First, log in to your private registry with the following command, and enter your username and password when prompted:

```bash
docker login <REGISTRY_URL>
```

Now build your image using the following command:

```bash
docker build . -t <REGISTRY_URL>/picsellia/<image_name>:<tag>
```

Here, `picsellia` is your username in your private registry, so do NOT change or remove it, or it will not work.

For example, to build my `processing-rotate` image, I would run:

```bash
docker build . -t l76gd76h.gra7.container-registry.ovh.net/picsellia/processing-rotate:1.0
```

And finally, push your image to the private registry:

```bash
docker push l76gd76h.gra7.container-registry.ovh.net/picsellia/processing-rotate:1.0
```

And tada 🎉 You now have a Docker image in your Picsellia private registry, ready to use!
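Both workflows use the same image reference for `docker build -t` and `docker push`. A typo in that reference, such as `/` instead of `:` before the tag, makes `docker push` look for a different local image that does not exist. If you script your builds, a small helper can keep the reference consistent. `image_reference` and `build_and_push_commands` below are hypothetical helpers. The tag check follows Docker's documented tag format: letters, digits, underscores, periods and hyphens, not starting with a period or hyphen, at most 128 characters.

```python
import re
from typing import List, Optional

# Docker tag format: [A-Za-z0-9_] first, then up to 127 of [A-Za-z0-9_.-]
TAG_PATTERN = re.compile(r"[A-Za-z0-9_][A-Za-z0-9_.-]{0,127}")


def image_reference(namespace: str, image_name: str, tag: str, registry: Optional[str] = None) -> str:
    """Build `[registry/]namespace/image_name:tag`, the format used in this guide."""
    if not TAG_PATTERN.fullmatch(tag):
        raise ValueError(f"Invalid Docker tag: {tag!r}")
    repository = f"{namespace}/{image_name}"
    if registry:
        repository = f"{registry}/{repository}"
    # The tag is joined with ':' (not '/'), for both build and push
    return f"{repository}:{tag}"


def build_and_push_commands(reference: str, context: str = ".") -> List[List[str]]:
    """Return the docker CLI commands to build and push `reference` (not executed)."""
    return [
        ["docker", "build", context, "-t", reference],
        ["docker", "push", reference],
    ]
```

The commands are only returned, not run. You can pass each one to `subprocess.run(command, check=True)` from the `processing` folder. For the private registry, pass `registry="l76gd76h.gra7.container-registry.ovh.net"` and `namespace="picsellia"`.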
