📈 Experiment Tracking

The second main feature of Picsellia is the ability to track all the experiments that lead you to the best version of your models. Let's discover it!

Experiment tracking is a discipline in itself, so Picsellia offers a fully functional experiment tracking system that supports your workflow and helps you reach the best performance. When training AI models, you will run many experiments with different pre-trained models, dataset versions, sets of hyperparameters, evaluation techniques, etc.

This process is highly iterative and time-intensive: once your training script is ready, you will spend a lot of time varying everything your final model depends on and relaunching the script until you are satisfied with its performance.

One big challenge you will face as a data scientist, AI researcher, or engineer is that you must be able to store all the important metrics for each experiment, store the main files, and finally compare your experiments to find the best one.
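To make those three needs concrete, here is a minimal plain-Python sketch of what tracking by hand could look like. It does not use the Picsellia SDK: the `Run` class, the `best_run` helper, and every value in the example are hypothetical and only illustrate the idea of recording settings, metrics, and files per run, then comparing runs.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """One training run: its settings, metrics, and output files."""
    name: str
    params: dict
    metrics: dict = field(default_factory=dict)
    files: list = field(default_factory=list)


def best_run(runs, metric, higher_is_better=True):
    """Return the run with the best value for `metric`, skipping runs that lack it."""
    scored = [r for r in runs if metric in r.metrics]
    if not scored:
        raise ValueError(f"no run recorded the metric {metric!r}")
    pick = max if higher_is_better else min
    return pick(scored, key=lambda r: r.metrics[metric])


# Hypothetical runs with made-up numbers, for illustration only.
runs = [
    Run("run-a", {"lr": 1e-3, "batch_size": 16}, {"val_accuracy": 0.81}, ["run-a/weights.h5"]),
    Run("run-b", {"lr": 1e-4, "batch_size": 32}, {"val_accuracy": 0.86}, ["run-b/weights.h5"]),
    Run("run-c", {"lr": 1e-2, "batch_size": 16}, {"val_accuracy": 0.74}, ["run-c/weights.h5"]),
]

winner = best_run(runs, "val_accuracy")
print(winner.name, winner.params, winner.files)
```

Keeping this bookkeeping correct across dozens of runs and several teammates quickly becomes tedious, which is the problem a dedicated tracking system is meant to solve.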

This whole process is called Experiment Tracking and you can do it seamlessly on Picsellia! In this section, you will learn how 😊

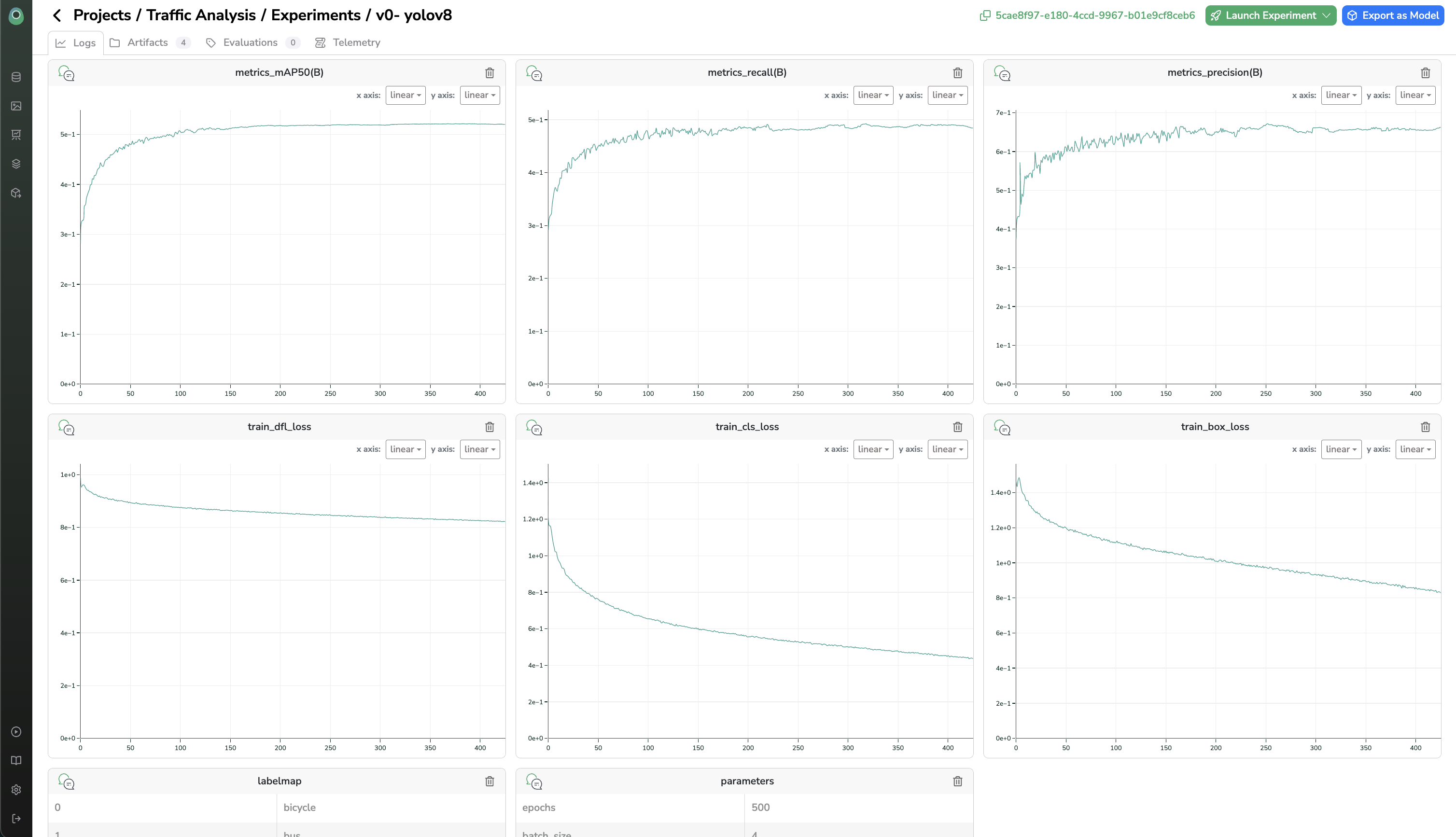

Logs

To log something means to save the value of a parameter, metric, or image so that you and your team can visualize it later on the platform in a practical form, such as an interactive plot or chart.

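```python
from picsellia.types.enum import LogType

# `experiment` is an existing Picsellia experiment object.
# Log three values as a line chart named "awesome-curve".
experiment.log([1, 2, 3], name="awesome-curve", type=LogType.LINE)
```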

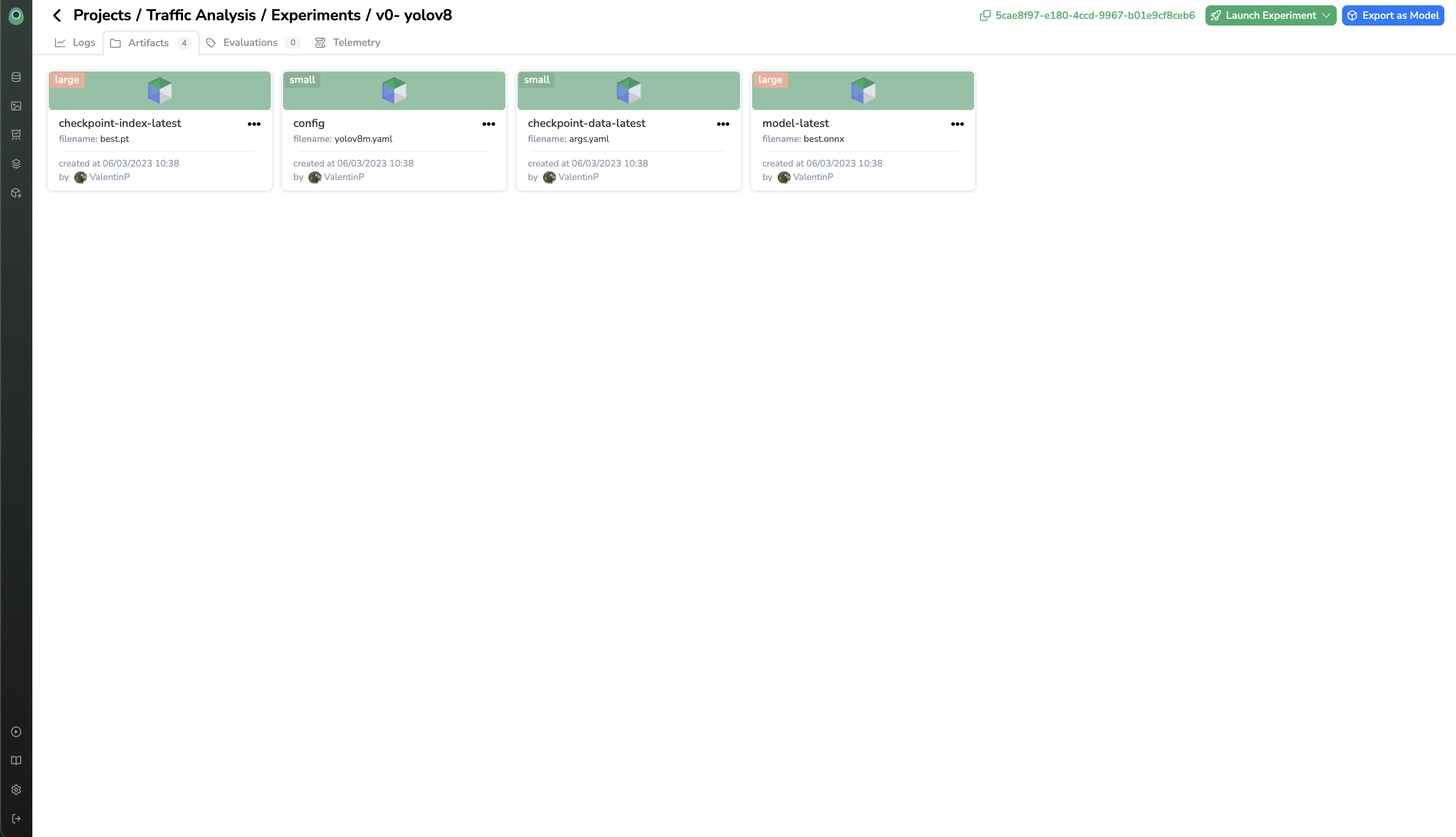
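In practice, the list you log usually comes out of a training loop rather than being typed by hand. The sketch below shows one way to collect such a curve; `collect_curve` and `fake_training_step` are hypothetical helpers, and the loss values are simulated rather than produced by a real model. The final logging call is left as a comment because it needs a live experiment; it simply mirrors the call shape of the snippet above with an illustrative name.

```python
def collect_curve(num_epochs, step_fn):
    """Run `step_fn` once per epoch and return the list of values it produced."""
    values = []
    for epoch in range(num_epochs):
        values.append(float(step_fn(epoch)))
    return values


def fake_training_step(epoch):
    # Placeholder for a real training step: returns a loss that shrinks each epoch.
    return 1.0 / (epoch + 1)


loss_curve = collect_curve(4, fake_training_step)
print(loss_curve)

# With a Picsellia experiment at hand, the list could then be logged using the
# same call shape as the snippet above (not executed here):
# experiment.log(loss_curve, name="train-loss", type=LogType.LINE)
```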

Artifacts

Artifacts are all the files that you generate when you run an experiment, such as model weights, checkpoints, configuration files, etc.

If you don't use a proper experiment tracking system, you will eventually have trouble organizing the different versions of your files and sharing them with your team.

That's why we let you store any file on our platform at any time during your experiment, so you can be sure to find it later and retrieve the files of your best experiment.

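```python
# Store the file at "path/to/file" as an artifact named "model-latest", zipping it on upload.
experiment.store("model-latest", "path/to/file", zip=True)
```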
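Before a file can be stored, it has to exist on disk. The following sketch, which uses only the Python standard library, writes a configuration file and zips a checkpoint folder so that both are ready to upload. The helper `prepare_artifacts`, the file names, and the configuration values are hypothetical; the upload itself is shown only as a comment that mirrors the call above.

```python
import json
import shutil
import tempfile
from pathlib import Path


def prepare_artifacts(workdir, config):
    """Write a config file and zip a checkpoint folder; return both paths."""
    workdir = Path(workdir)
    config_path = workdir / "config.json"
    config_path.write_text(json.dumps(config, indent=2))

    checkpoint_dir = workdir / "checkpoints"
    checkpoint_dir.mkdir(exist_ok=True)
    # Placeholder bytes standing in for real model weights.
    (checkpoint_dir / "weights.bin").write_bytes(b"\x00" * 16)

    # shutil.make_archive appends ".zip" to the base name and returns the archive path.
    archive = shutil.make_archive(str(workdir / "checkpoints"), "zip", root_dir=checkpoint_dir)
    return config_path, Path(archive)


with tempfile.TemporaryDirectory() as tmp:
    config_path, archive_path = prepare_artifacts(tmp, {"lr": 1e-3, "epochs": 10})
    artifacts_ready = config_path.exists() and archive_path.exists()
    archive_name = archive_path.name
    print(config_path.name, archive_name)
    # Each path could then be handed to the store call shown above, e.g.
    # experiment.store("config", str(config_path))  (not executed here)
```

Zipping a folder yourself is one option when an artifact spans several files; for a single file, the `zip=True` argument shown above may be enough.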
